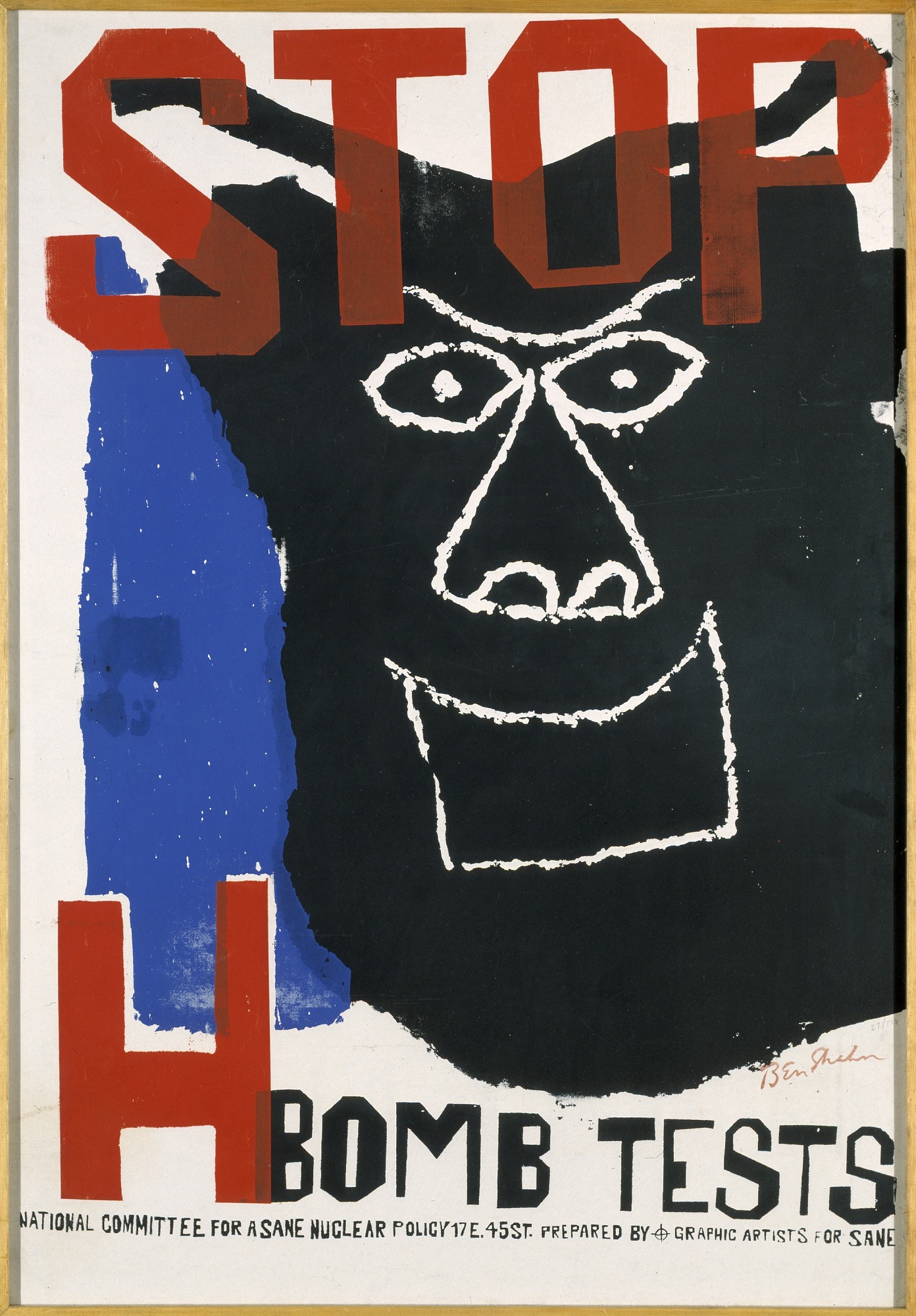

H Bomb Poster, 1960 © Ben Shahn | Smithsonian American Art Museum

I am writing this sentence during the sixth month of Putin’s war. If the worst happens, I’m aware that no readers will ever take in these ruminations. But assuming this fearful conflict, after painfully grinding on, ends with some kind of negotiated settlement, what then?

In the past, the endgame phase of wars has proven a great teacher, the precious time when the human imagination and the human heart have been prised open in new ways. The just-ended horror has sharp edges that will soon be rubbed smooth by time, but while the gruesomeness remains fresh in the social memory, real change in what led to the slaughter can seem absolutely mandatory.

Immediately after World War I, in direct response to the unprecedented carnage that had destroyed most of a generation of Europe’s males, a mutation in the conduct of human affairs was attempted with the establishment of a first intergovernmental organization whose purpose was the maintenance of peace throughout the world—the League of Nations.

Its covenant enshrined guarantees of collective security; goals of disarmament; conflict resolution through arbitration; the protection of smaller nations and of minorities within nations; principles of consent of the governed, and the right of groups to “self-determination.” The League was a vision of Woodrow Wilson, the American president, and for it he was revered abroad and awarded the Nobel Peace Prize. Because one party to the conflict—Germany and its allies—had been defeated to the point of surrender, it seemed the way was clear for the leader of the winning side to propose a new post-war order. Yet Germany proved not to be the obstacle. Wilson was unable to get the United States Senate to ratify American participation in the League—an absence which ultimately proved the organization’s deathblow.

But the will to avert the destruction of war survived the hollowing out of the League’s purpose, and in 1928, fifteen nations signed the Kellogg-Briand Pact in Paris. The agreement did two things: it outlawed war as an instrument of national policy, and it bound signatories to settle disputes by peaceful means. Frank B. Kellogg was the U.S. Secretary of State, and Aristide Briand was the French Foreign Minister. Eventually, almost every established nation in the world signed the treaty, and the U.S. Senate ratified it by a vote of 85 to one. Kellogg was awarded the Nobel Peace Prize in 1929, but a year later Japan, a signatory, invaded Manchuria, and it was quickly apparent that the Kellogg-Briand Pact was unenforceable. Germany was another signatory, and by 1933, its way to war was clear.

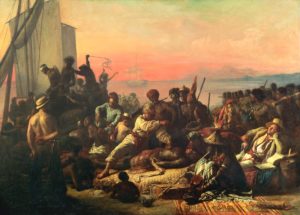

But not every post–World War I attempt to mitigate war’s horror crashed on the bulwark of “realpolitik.” The worst nightmare to unfold in the trenches of Northern Europe had been the mass asphyxiation caused by the widespread use by all sides of poison gas, the deployment of lung-destroying agents like chlorine and cyanide. Hundreds of thousands of civilians and combatants had died horrific, choking deaths on the spot of dispersal, and many tens of thousands of survivors suffered lasting respiratory and brain damage as a result of inhaling gas.

In 1925, a treaty was agreed in Geneva, hence the Geneva Protocol, outlawing all use of poison gasses, as well as “Bacteriological Methods of Warfare.” Thirty-eight states were the original signatories. The pact is still in force, and by now 146 nations have signed it, the most recent being Colombia, which ratified the treaty in 2015. Chemical weapons are still being developed and even deployed, and research into weaponized biology is still being conducted, but such agents remain illegal, and their use is exceptional. Their post–World War I legal interdiction stands as a milestone of human improvement.

The point of remembering the visionary leaps that were attempted in the wake of World War I is not, as is usually the case, to emphasize their failure.

Rather, it is both to marvel that such efforts were made at all, and to mark the possibility that they will yet bear fruit, if mainly as an example of what can be attempted.

After all, the dream of the League of Nations was carried forward in the mind of one of its first and staunchest advocates—Franklin Roosevelt: as a candidate for Vice President in 1920, he crossed the United States, giving more than 800 speeches in favor of the League. Twenty years later, in the earliest days of World War II, FDR advanced a future entity called “the United Nations” with a political savvy Wilson lacked. He made creative use, for example, of ambiguity about the proposed organization’s roots in the wartime anti-Axis alliance, also known as the United Nations. Roosevelt’s vision of a post-war international peacekeeping body was a corrected and far more realistic version of the League. As the shattering carnage of this second global conflict became clear from Europe to Asia, the longing for a new world order was more intensely and widely felt than ever. As the war drew to a close, that order’s moment had arrived.

The United Nations Charter was drawn up at a meeting of 50 nations in San Francisco. The session began on April 25, 1945, two weeks after Roosevelt’s death and two weeks before the surrender of Germany. The U.S. Senate vote on the UN treaty came within a couple of months, and in contrast to what befell Wilson’s plan, the accord was ratified with only two votes cast against it.

But what gave the new organization an unimagined urgency was what happened just as it was coming into being—the American use of atomic bombs against Japan in August. It is true that with the Axis powers soundly defeated, there was no inbuilt opposition to an initiative taken by the uniquely victorious United States, but the new weapon brought with it a transcending post-war urgency. An immediate and universal recognition of the unprecedented threat represented by nuclear weapons, even if monopolized by the Americans, resulted in control of the atom being the new international body’s originating order of business.

Indeed, the first resolution ever approved by the General Assembly, voted on January 24, 1946, proposed to “deal with the problems raised by the discovery of atomic energy.” The resolution created the UN Atomic Energy Commission, and defined its purpose as developing a program for “the elimination from national armaments of atomic weapons” and the use of atomic energy “only for peaceful purposes.”

The United States, as the sole possessor of the bomb, took the initiative in devising norms for the international control of atomic weaponry. For more than a year, an authentic impulse to actually accomplish such an outcome seemed to be underway. It began immediately after Japan’s surrender, when the U.S. Secretary of War, Henry Stimson, proposed in a memo to President Truman to head off, as he put it, “an armaments race of a rather desperate character.”

What worried Stimson was a world-threatening nuclear weapons competition with the Soviet Union, which had quickly morphed from essential ally to nascent adversary. “To put the matter concisely,” he proposed taking immediate steps “to enter into an arrangement with the Russians, the general purpose of which would be to control and limit the use of the atomic bomb.”

Stimson, no bleeding heart, had presided over the entire American war effort as well as the creation of the atomic bomb itself. He had ordered its use against Japan. But the elderly statesman was clear-eyed about what was suddenly at stake for the future of the human race. That prompted him, astonishingly, to propose that the United States immediately “share” scientific and industrial information about the atomic bomb with the Soviet Union. That meant surrendering the nuclear monopoly and devising agreed-upon international controls of all nuclear weapons possession and manufacture, which inevitably involved some surrender of national sovereignty. In Stimson’s own words, his aim was to save “civilization not for five or twenty years, but forever.”

Stimson’s memo was dated September 11, 1945, and to Truman’s credit, he put the radical proposal before his entire cabinet. Surprisingly, most of those war-hardened statesmen, including Dean Acheson, received Stimson’s view favorably—as did the Joint Chiefs of Staff.

It is important to recall that in the immediate aftermath of World War II, the shape of post-war antagonisms had not solidified, and powerful figures in the national security establishment were inclined to some kind of cooperative nuclear arrangement with the Soviets. Truman, doubting that Moscow could ever come up with a bomb of its own and loath to give up the American atomic monopoly, made no final decision at that point. But an unforeseen open-ended process of seriously dealing, in the UN’s phrase, “with the problems raised by the discovery of atomic energy,” was begun.

Especially once the new United Nations General Assembly made international control of the atomic bomb and its eventual elimination a priority, attempts were made to create a structure of such agreement. In November, Truman convened a meeting in Washington of the Prime Ministers of Canada and the United Kingdom, and they seriously entertained proposals for sharing atomic information, establishing inspections regimes, and dismantling existing bombs—all under the auspices of the UN, and including the USSR. In subsequent months, the United States and the Soviet Union dueled over the terms of the negotiation. The idea of short-term nuclear abolition fell away, but the issue of international controls on the atomic bomb, centered in the UN, continued to be debated. Washington kept the initiative, leading to the Acheson-Lilienthal Report of early 1946 and the superseding Baruch Plan, which was presented to the UN late in the same year. Presiding over the Baruch Plan was Bernard Baruch, a Wall Street mogul and one-time intimate advisor of Woodrow Wilson. Baruch, as events would show, shared Wilson’s trait of impatient moralizing.

These attempts at constructing a system of international control for the atomic bomb are understood to have failed because they all required sweeping internal inspections that Moscow with its closed society refused to entertain. More damaging still were the plans’ assumptions that America’s exclusive custody of existing bombs (“trusteeship”) would continue and that the Security Council veto would not apply to questions having to do with atomic energy, along with the plans’ provision that any nation in violation of the agreement would be subject to a UN-ordered atomic attack. In effect, a noncompliant Moscow would be threatened with a UN-sponsored armageddon.

It can seem naïve in hindsight to imagine that Joseph Stalin would have accepted any plan forbidding him to develop a Russian atomic bomb, but in 1946, well before Russia’s capacity to actually manufacture such a weapon was evident to Stalin or anyone else, there were real benefits to Moscow of international controls restraining Washington’s singular dominance. In the event, the proposed “controls” as they emerged in the early-round negotiations were steadily tilted America’s way, but the pressures for compromise continued to be felt by both sides. Moscow wanted to continue the bargaining. But Bernard Baruch, aware of Moscow’s outstanding objections, insisted on a premature take-it-or-leave-it vote. The Baruch Plan failed at the UN on December 31, 1946. “And so on the last day of 1946,” the historian Daniel Yergin wrote, “any genuine effort to head off a nuclear arms race came to an end.”

But again, the point of this review is not the usual one—a realpolitik emphasizing the failure of a romantic post-war attempt at nuclear arms control, and even an attempt at the elimination of nuclear weapons before they began their mushroom-like multiplication. Rather, the point of this reappraisal is to remember the all-but-forgotten first efforts of a bevy of hard-nosed “realists” to bring about such an “idealistic” accord in the first place.

And I do mean forgotten. I had a close-up brush with that amnesia when, in researching House of War, I asked both Arthur Schlesinger and Robert McNamara what they made of the Stimson proposal to “share” the atomic bomb with Moscow, and neither of them had ever heard of it.

Those efforts to immediately erect guardrails around nuclear weapons, given the sorry history that followed upon their falling short, deserve to be lifted up and celebrated as examples of what can follow in the chastened aftermath of a disastrous war.

Or a near war.

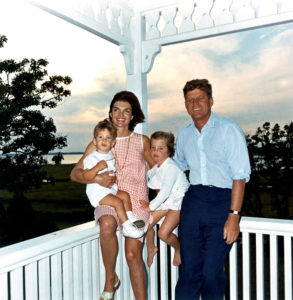

The Cuban Missile Crisis of 1962, when the United States and the Soviet Union came within a hair’s breadth of launching the global spasm of destruction, ignited a widespread fever of terror that in turn sparked the first serious effort at arms control since the Baruch Plan failed. By the time John F. Kennedy had taken office as president in 1961, the U.S. nuclear arsenal had grown to nearly 20,000 bombs and missile warheads—most of them genocidal thermonuclear weapons—H-bombs.

For Kennedy, the October missile crisis was an epiphany, and he acted on it. A few months later, in June of 1963 at American University in Washington, D.C., he addressed a plea directly to the Soviet people: “For in the final analysis, our most basic common link is that we all inhabit this small planet. We all breathe the same air. We all cherish our children’s future. And we are all mortal.” The Soviet Premier Nikita Khrushchev seems to have heard the plea as if addressed directly to him. He ordered Soviet media to broadcast recordings of the speech, the first time Russians heard the voice of an American president.

But in addition to the leaders and people of the adversary nation overseas, Kennedy was speaking to the killer bureaucrats in the Pentagon and his own administration—none of whom had seen the text of his American University speech before he delivered it. “Some say,” he went on, “that it is useless to speak of world peace or world law or world disarmament.” But Kennedy was saying it was urgent to do so. Indeed, in early Cold War Washington, the word “disarmament” had been discarded as dangerously naïve, but in this speech Kennedy brought it back, and he proposed “a series of concrete actions and effective agreements” that could make it real. In particular, he called for an immediate start of negotiations on a treaty to outlaw the nuclear tests which were already poisoning the atmosphere.

This post-Cuba initiative of Kennedy’s bore fruit at once. Within days, the United States and the Soviet Union began negotiating what became the Partial Nuclear Test Ban Treaty of 1963, agreed to less than three months after Kennedy’s speech. That treaty committed its signatories, as the text declares, to “the speediest possible achievement of an agreement on general and complete disarmament under strict international control.” Only three months later, Kennedy was murdered, but an unprecedented regime of nuclear arms control negotiations, explicitly defined as aiming at “disarmament,” was established, and it would survive for many years. It led to the Nuclear Non-Proliferation Treaty of 1968, and to the Anti-Ballistic Missile Treaty of 1972, both of which committed to “achieve at the earliest possible date the cessation of the nuclear arms race and to take effective measures toward reductions in strategic arms, nuclear disarmament, and general and complete disarmament.” Indeed, despite the ways in which the arms race continued almost unabated for more than two decades, the turn that Kennedy took after the nightmare of near war over Cuba led, in the era of Ronald Reagan and Mikhail Gorbachev, to the nonviolent end of the Cold War.

James Carroll is the author, most recently, of The Truth at the Heart of the Lie.
