Photo Credit: j_rueda/Shutterstock.com

_____

As America looks cautiously toward economic “reopening” post-pandemic, most official prescriptions share a fundamental premise: that recovery means rebounding to pre-pandemic G.D.P. growth and pursuing the promise of new employment opportunities in the private sector.

President Biden’s “Build Back Better” climate and economic recovery plan is geared toward returning the country to near-full employment. “When I think of climate change,” Biden says, “the word I think of is jobs.”

“More jobs” is a familiar political rallying cry, the seemingly perennial answer to America’s chronic economic woes. Yet as the pandemic and the resulting shutdown shed light on the kinds of work most essential to society, this premise appears questionable. One of the lessons of the coronavirus, after all, is that a significant share of private-sector work proved “non-essential.” So why must “building back” to the pre-pandemic status quo be so desperately important?

The assumption that growing private-sector employment is always socially beneficial has guided economic policy at least since the recovery from the Depression of the 1930s. Yet today it elides a simple fact: over the past four decades, an increasing share of the work Americans are employed to do has contributed little or nothing to maintaining societal living standards. The prevailing economic system induces work in many activities, from privacy-infringing advertising to perniciously addictive attention platforms, that generate little or no social value. But by coming to grips with the hidden costs of unnecessary work, we can begin to unlock new opportunities.

The shift over the last four decades away from manufacturing and into the much-celebrated “information economy,” as well as into less glamorous retail and service jobs, is generally held up as the pinnacle of economic development. But there is ample reason to doubt such self-congratulatory triumphalism. It is by now a familiar fact that average wages have not kept pace with the cost of living since the late 1970s. Beyond widening income inequality, the types of work Americans are employed to do have shifted dramatically, leading to subtler problems.

Beginning in the Reagan era of deregulation, the share of the U.S. workforce in “goods-producing” sectors like mining, manufacturing, agriculture, transportation, and utilities declined from 34.5 percent in the late 1970s to 19 percent in 2019. Some of what replaced those jobs has been socially vital (though often underpaid) work in nursing, home health, and teaching. But between 1979 and 2019, the fastest-growing employment categories were finance, insurance, and real estate (FIRE) and non-governmental services. Combined with retail and wholesale trade, such work now occupies nearly half (46.9 percent) of the American workforce.

Rather than further liberating society from work, the “post-industrial” shift brought with it bloated corporate competition and inefficient distribution arrangements which contribute little if anything to maintaining societal living standards. Under the guise of economic dynamism, less socially valuable work was welcomed in.

Even before the pandemic, polls in the US, the UK, and elsewhere in Europe found that 20 to 40% of workers in these “post-industrial” economies did not believe their job made a “meaningful contribution to the world.” Such feelings, while subjective, are not unwarranted. As the late David Graeber argued in his 2018 book Bullshit Jobs: A Theory, the conspicuous rise of the office job has been accompanied by a rise in “pointless work.” Collecting the testimonies of disaffected employees, Graeber described a regime of corporate make-work in which unnecessary tasks are performed mostly to aggrandize managers angling for their next promotion. He dubbed this dynamic “managerial feudalism.”

Having declared as much as 40% of the work performed by Americans to be bullshit, Graeber hoped Bullshit Jobs would be “an arrow aimed at the heart” of the new work regime. But the establishment, by and large, met his argument with a shrug. “Graeber has written an amusing essay,” one response in The Economist sneered, about “lots of people doing meaningless tasks they hate.” Economic “disaggregation,” it confidently reaffirmed, “may make it look meaningless,” but “[t]his complexity is what makes us rich.” Unfulfilling as contemporary work may feel, the mainstream line assures us that the current system has nevertheless paid off, at least in this country.

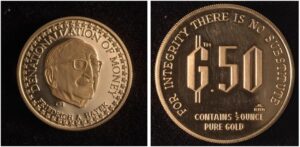

Despite its pretense of common sense, such a defense of economic “disaggregation” overlooks two important points. The first is the extent to which gains from labor-saving technological advances have been squandered since the “Keynesian bargain” of the post-war era. The decline of manufacturing in richer parts of the world is typically explained by offshoring to the developing world in search of cheaper labor. But this popular explanation, while partly true, obscures the fact that total manufacturing output in “post-industrial” economies has actually increased since the 1970s, even as manufacturing’s share of employment declined: in the US, for example, from 22% in 1970 to just 8% in 2017. As Aaron Benanav demonstrates in Automation and the Future of Work (2020), manufacturing output more than doubled over the same period in the US and other “post-industrial” nations. In short, he concludes, “more and more is produced with fewer workers.”

John Maynard Keynes, the economist remembered as an architect of that post-war consensus, famously predicted that his grandchildren would work a 15-hour week. But even though technology has made human labor less necessary for maintaining living standards, capitalism’s “labor-saving” innovations have failed to yield more leisure time since the 40-hour workweek became standard. On the contrary, most signs indicate that before the coronavirus, Americans had been working more hours than ever (by one estimate, the average worker was putting in 199 more hours a year in 2000 than in 1973) while facing increasing precarity in employment.

As it turns out, that crucial turning point at the end of the 1970s brought a foreclosure not only on real wages but also on workers’ free time and ability to relax. This leads to the second flaw in the disaggregation defense: many of capitalism’s more recent “innovations” actually increase the socially necessary labor-time required for goods to be distributed and consumed.

The insight that capitalism’s so-called “labor-saving” inventions do not on their own reduce the collective time a society works is an old one, attributable to Karl Marx. In Capital, he noted how the inventions of the industrial revolution served merely to speed up work processes as they proliferated through market competition. Marx’s systematic insight helps explain the inefficiencies wrought by the unprecedented scale competition has reached in the era of multinational corporations, fueling the bullshit jobs boom.

Advertising is the most prevalent example: large corporations have made the US by far the largest advertiser per capita in the world. Among the largest US companies, marketing accounts on average for more than 11% of annual budgets. Hundreds of billions of dollars and the work of hundreds of thousands of workers are expended every year in this mutually exhausting struggle for marginal market share (think Pepsi vs. Coke, or Apple vs. Android).

A whole range of similar practices, such as sales and marketing, consulting, and the tireless variation of product and service “innovation,” all sap society’s collective labor and resources. Crucially, each of these practices can be rationalized for an individual firm on the promise of short-term market advantage. But as intermediary agencies, consultancies, and service platforms hook whole industries of competing firms on some novel practice, cottage industries are spun out and normalized, complete with jobs to staff them. Lucrative as such fields may be, who ever dreamed of growing up to go into ad tech, or to become a “Salesforce Administrator”?

Obviously, competitive practices like advertising are not new. But the sheer scale of mutually exhausting make-work in recent decades marks something new in the development of capitalism. Having extracted surplus value from productivity-enhancing machines and processes for decades, capital increasingly exploits a range of practices wholly extraneous to production. And the costs of this arms-race mentality must invariably be passed on: both as wage earners and as consumers, working people ultimately foot the bill for runaway corporate competition. In more ways than one, society in such a system pays to have things marketed to itself.

And yet the economic orthodoxy that guided us here appears unable to acknowledge these costs, let alone offer solutions. The textbook economic “law” of efficiency may hold that, in perfectly competitive markets, market forces are supposed to “punish” inefficient sellers in the long run. But with the “free hand” of the market effectively bound by near-full employment policies, work is induced that generates little or nothing that people, as opposed to markets, actually value. The privileging of economic growth for its own sake has come with increasing disregard for the actual social value and content of these “employment opportunities.”

It bears emphasizing that, given the current employment paradigm, full employment certainly remains preferable to mass unemployment, and a federal jobs guarantee remains a smart near-term policy goal. But once the true social costs of hyper-competition and convenience-driven innovation are tallied, the old drumbeat of “more jobs” and “employment opportunities” proves inadequate. To pretend that the reproduction of society today requires the same amount of labor as in the 1930s reinforces an artificial sense of scarcity in one of the richest countries in the world.

Such a system is not only unjust but demonstrably uneconomical and, in the context of global climate change, dangerous. A deeper reorientation in our thinking about work will by no means be simple: conventional economic indicators like G.D.P., financial markets, and employment figures would likely all take a hit. But with a full reorientation around socially useful versus wasteful labor, the excesses of work could be reined in, unleashing the resources and talents currently spent in frenetic competition and app-based servitude.

Technological advances now enable us to reduce our reliance on superfluous jobs without putting livelihoods at risk. From this vantage, uncoupling livelihoods from employment no longer appears a utopian ideal but a necessity for breaking out of capitalism’s artificial scarcity. Raising the “floor” of basic purchasing power, whether through a universal basic income (UBI) or through the decommodification of essential services like housing and health care, should be the goal.

Abundance, as Benanav writes, “is not a technological threshold to be crossed,” but rather “a social relationship” to be realized. With a new politics surrounding work and its organization, the unprecedented capacities of the twenty-first century can finally be redirected—be it toward green work, social care, or collectively reclaiming “time for what we will.”

_____

Jared Spears is a writer whose work has recently appeared in Futures of Work, Jacobin, and elsewhere on the web.