Image credit: Verso

Our world is increasingly mediated through algorithms, and we place significant trust in their decisions. Justin Joque’s latest book, Revolutionary Mathematics: Artificial Intelligence, Statistics, and the Logic of Capitalism, explores how the transformation of the statistical methods that underpin algorithms became the organizational backbone of contemporary capitalism.

This change in the production of knowledge and the extraction of value was not merely technical, but also deeply philosophical. Joque traces the revolution in statistics and probability that has quietly underwritten the explosion of machine learning, big data, and predictive algorithms that now decide many aspects of our lives.

Lydia Nobbs: Tell us how you came to write this book.

Justin Joque: I had been reading the media studies literature about algorithms and algorithmic society, and what stuck with me was that algorithms often don’t work. To give a local example, in 2013 Michigan created the Michigan Integrated Data Automated System (MIDAS), an algorithm to manage the state’s unemployment insurance. It was supposed to automatically detect fraud and it just didn’t work. In fact, it kicked 34,000 people off unemployment benefits, falsely accusing them of fraud. There was no way to appeal these decisions, and some people lost unemployment insurance for three or four years and were put in incredibly precarious situations. They couldn’t wait six years to go through the courts and get it sorted out.

So, I am interested in what algorithms are doing, but I’m also interested in a social-juridical question: How do algorithms get this power that we then must respond to? If you lose unemployment insurance and can’t buy food, it doesn’t matter if the algorithm is right or wrong. How do algorithms create this reality?

And I have also always been interested in Marxist ideas of objectification, and the ways that markets create realities. It seemed there were similarities at play.

LN: Could you explain to our readers what you mean by objectification, and how algorithms function through that?

JJ: Marx talks about objectification as a metaphysical process: how objects come to account for our affairs. It’s less about treating people like objects and more about objects becoming like people, having this metaphysical power to make decisions for us. You can see that in algorithms too: whether it’s the way Amazon ranks or recommends things, or how credit scores work, it’s very similar. All these systems create realities that no amount of belief or critique can unsettle.

By metaphysical, I mean ways of thinking that govern physical systems. Value or price—they don’t exist in a physical way. A chemist can’t “discover” the price of some good. There is nothing physical that has “price” in it. Or take probability. Outside of quantum mechanics, we don’t really know what probability is in a physical sense. Say there’s a 70 percent chance of rain tomorrow, but it either rains or it doesn’t. There isn’t a probabilistic event that is physical.

So, this is what I mean by metaphysical. I don’t want to call these systems ideological, because they’re intellectual supplements to the physical world. They allow us to think and do things. And I don’t mean to say they’re imaginary. They are real, but not material, not physical.

LN: And it doesn’t matter if people believe in them, they function anyway.

JJ: Exactly.

LN: You write that your work isn’t to pull back the veil, you’re not doing the work of dereification. You walk your reader through the “infidel mathematicians” of calculus to highlight mathematical foundations that are not wholly grounded in reason. And yet, calculus works anyway. Can you distinguish between dereification and your goal for this book?

JJ: There is a standard Marxist or leftist aim of dereification. Explicitly or implicitly, the goal is to get to ‘what’s really happening.’ Then, we can fix things, or have a one-to-one correspondence between the real world and our understanding of it.

Metaphysical supplements like price, value, and probability are productive. There is no complete access to the real, material world. We require abstractions to think about labor, to move goods around, to live in a society. To imagine a world without them is nonsensical.

So, I’m not trying to get to the ‘real’ underneath things but to imagine other ways that ground could be constructed. As I say in the book, “another objectification is possible.” If there is a task of dereification, it’s only an aid in reifying things in different, more just, and equitable ways.

LN: What would make “another objectification” possible?

JJ: That’s a whole other book! It’s not something that can be rationally approached. It must be done both on the concrete level, and in individual or group struggles, as ways to imagine something different. In my first book, I worked with deconstruction and Derrida. I’m still taken by this Derridean idea of the democracy “to come”: the unknowable and perhaps “never-arriveable” future. But it requires openness to think that way.

LN: You have a clear-eyed view of the improbability of revolutions that will overcome capitalist and statistical determinism. How should we think about that against the context of, say, thousands of people cut off from unemployment insurance?

JJ: A key point of the book is showing how systems regulate the relationship between the specific and the universal. So, sites of imagination and struggle involve both of these. My claim is not that we have to reimagine value, or individual struggles, whether it’s for racial justice or trying to get insurance. It’s not that one exists and determines the other: they’re mutually constitutive.

LN: We’re increasingly aware that algorithmic outputs are biased, sexist, and racist, reproducing injustices from the data on which they’re built. You’re certainly not ignoring these findings; you draw on and credit these researchers. Yet, your work also seeks to do something different.

JJ: The work you describe is incredibly important. I’m trying to add to the conversation by showing how what’s at stake is plugged into larger systems. Algorithms are ultimately doing the work of political economy, so I’m interested in demonstrating how algorithms produce value.

LN: We hear a lot about data “as the new oil.” But it’s not necessarily clear how vast volumes of data produce value, or at what stage we can consider it knowledge. How does your book deal with the relationship between data and value?

JJ: It’s an important question and there are two parts to it. One surprising element of mid-twentieth century statistics is someone like Leonard Savage, a leading Bayesian statistician, saying that we long thought that the point of knowledge production was to determine truth value. But really, it was about which opinions are worth something. There’s a shift in statistical approaches from one driven by what we traditionally think of as knowledge—knowing ‘the truth’—to a more economic approach. That shows what’s always been the case but admits it in a more clear-eyed way: knowledge production is always tied to political economy. In fact, the ways knowledge is produced and circulated can’t be divorced from economics and the political economy at stake.

And the other part is that we’re seeing a big shift in data itself: collections of huge proprietary data sets, and the rise of platforms like Amazon and Facebook for making decisions. Before, lots of data production was financed by the state, through censuses. In the book, I talk about the enclosure of the general intellect: decisions are now made from increasingly locked-down information stored in privatized platforms.

And more than ever, value extraction systems are also adversarial. If they’re founded on shared data sets, the ways markets become adversarial are based on outcomes, on what you can extract. With enclosed datasets, it’s more efficacious to dissimulate and obfuscate knowledge. One can produce more value and profit by creating fakes. We see it in disinformation, and in replication crises in the sciences.

LN: You mentioned that our previous understanding of statistics was closely related to the idea of truth, but we have now disregarded those traditional epistemic approaches. Instead of causation, it’s now much more about correlation. Could you expand on this distinction?

JJ: Sure. For example, Ronald Fisher is a big name in the history of statistics. In the early 20th century, he produced what we’re taught in all of the Intro to Statistics classes. We have a hypothesis, gather data, and determine the likelihood that the conclusion is due to chance versus some causal mechanism or treatment difference. But there’s always a possibility that what you observed was due to chance rather than a causal mechanism.

In other words, once you start thinking about science in a probabilistic way, there’s always an infinitesimal reminder you might be wrong. Fisher accepted the possibility that we don’t ever know the hypothesis is true, but we continually gather evidence against the possibility it was just due to chance. However unsatisfying it is, you never know something for certain.

Jerzy Neyman and Egon Pearson took a different, economic approach. They said: “If 0.5% of the time we might be wrong, we’ll calculate the cost of being wrong.” So, a steel mill guarantees their steel up to certain tolerances or quality, saying “we’re 99% sure the steel is up to the quality that we’re selling it at.” And then they calculate that 1% chance into their loss models.

Intellectually, this approach grounds itself more strongly when it shifts from knowing the truth to making economic calculations within the acceptable percentage of being wrong. This carries into Bayesian statistics, seeing probability as a subjective, positional thing. It really has to do with economic actors just trying to make the best decision they can, given the knowledge they have.
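Editor's illustration: the contrast described above can be sketched in a few lines of Python. This is a minimal sketch and is not taken from the book or the interview. The coin-flip experiment, the function names, the cost of 5,000 per failed batch, and the 1,000 batches are all hypothetical; only the 1% error rate echoes the steel mill example. The first function follows the Fisher-style question (how likely is a result at least this extreme if only chance is at work?), and the second follows the Neyman-Pearson-style question (what does the accepted chance of being wrong cost?).

```python
from math import comb


def binomial_p_value(successes: int, trials: int, p_null: float = 0.5) -> float:
    """One-sided exact p-value: probability of seeing at least `successes`
    in `trials` attempts if only chance (success rate `p_null`) is at work."""
    return sum(
        comb(trials, k) * p_null**k * (1 - p_null) ** (trials - k)
        for k in range(successes, trials + 1)
    )


def expected_loss(error_rate: float, cost_per_error: float, units: int) -> float:
    """Price the accepted chance of being wrong across `units` decisions."""
    return error_rate * cost_per_error * units


# Fisher-style: 9 heads in 10 flips of a supposedly fair coin (hypothetical data).
p = binomial_p_value(9, 10)
print(f"p-value: {p:.4f}")  # small, but never zero: chance is never ruled out

# Neyman-Pearson-style: a 1% chance that a batch is below spec,
# with a hypothetical cost of 5,000 per failed batch over 1,000 batches.
loss = expected_loss(0.01, 5_000, 1_000)
print(f"expected loss: {loss:.0f}")
```

The first number is only ever evidence against chance, while the second turns the same uncertainty into a line item that can be budgeted for.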

LN: Right, we’re configured into economic actors.

JJ: Yes. Hyper-rationalists really glommed onto Bayesian statistics. A group called Less Wrong wanted to integrate Bayesian statistics into an ideal of humanity, as the height of rationality, in sometimes even obscene and problematic ways.

LN: You make an important point that algorithms and their outputs tend to get ascribed as being “the truth.” And for many, terms like “k-nearest neighbors” or “naive Bayes classifiers” are intimidating, adding mystification and awe around AI. It seems there’s political work being done in highlighting that the outputs algorithms produce don’t necessarily have a truth value in the ways we understand meaning and truth.

JJ: Neyman and Pearson tried to add to Fisher’s work, but he reacted very strongly to that: the three of them spent decades attacking each other in the statistical literature. Fisher was uncomfortable with their economic approach, preferring the idea of the ‘heroic scientist’ who toils in their laboratory and finds knowledge on their own.

And so, I completely agree with you. There’s mysticism in the tech industry’s hyping of AI. But there is also real discomfort in certain quarters with knowledge shifting, as Savage says, toward being based on value rather than on the ability to know the truth. That discomfort appeared as early as Fisher’s response to Neyman and Pearson.

To my mind, one of the most dangerous things about our present moment is the combination of shifting, market-optimized truths and our tendency to read eternal truths back onto them. In other words, momentary correlations are read as evidence of eternal, and often problematic, truths.

Obviously, this isn’t the whole story, but I think there is something in this that explains the return of race science and eugenics, and thinking about gender and sex in rigid ways. We are seeing new investment in these discourses, and it is very troubling that momentary correlations are being given such enduring weight.

LN: Could you expand on the parallel between how we apply the idea of universal truth to individual situations?

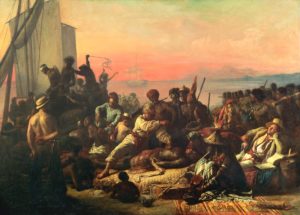

JJ: Here, I draw on Moishe Postone, who talks about the relationship between concrete and abstract domination. Concrete domination is individual acts of violence, often along racial and gendered lines. They’re then laundered through the market and optimized, because capitalism finds new ways to reinscribe old dominations and extract value.

You see the same thing with algorithms. An example is YouTube as an engine for radicalization. You click on something and next it starts recommending neo-Nazi propaganda or white supremacist or anti-trans videos. It’s not because the engineers wanted that, but because they’re trying to optimize for profit. The algorithm figures out that it can draw people into watching something more extreme, and YouTube’s profit motive and reactionary content are now in a feedback loop. One is profiting off the other, then re-entrenching it.

LN: You write that you’re not taking a Latourian approach to object analysis, but in some ways, the object has some power in this feedback loop. It wasn’t the intention to create extremist platforms on YouTube, but something about the created object has that power.

JJ: You get at an important point that draws us back to objectification. I think Marx does a better job than Latour at understanding this. Marx understands that it’s not mystical objects deciding things on their own, but that we’re taking social relations and encoding them into objects. I think Latour only gets it half-right. He misses the ways objects are already social relations that have been encoded. Objects maintain social relations for us. Similarly, the social relations of domination, white supremacy, and imperialism are encoded into the algorithm.

LN: There’s a debate about the degree to which humans should be the ultimate object of inquiry. Latour is probably closer to a posthuman approach, or Jane Bennett, who thinks through the agency of objects. But if you’re seriously challenging something like white supremacy or, say, the invasion of sovereign nations, then perhaps it doesn’t make sense to exclude the human as our locus of analysis.

JJ: I need to think more about that. I’m not necessarily committed to old-school humanism because there’s nothing sacred about the human. But it’s less a question about human versus object than the relationship between objects and processes. The main problem I see with Latour is less his posthumanism than the solidity he attributes to objects. There’s something material about social relations, and there clearly are material things that matter. But Latour and object-oriented ontologists focus too much on solidified things. They move from solid object to relations, whereas the Marxist view of objectification starts with social relations, then shows how they produce this solidified object.

LN: You’re quite careful in your book to ensure your piece isn’t read as anti-science. Does our current moment require this emphasis?

JJ: I don’t think I’m necessarily worried about the present moment. I have more of a personal commitment to science, math, and statistics. It comes back to the idea that another kind of objectification is possible. These processes are capable of producing knowledge. The important, powerful discovery of Savage and the Bayesians is that statistics is really a subdiscipline of economics. It allows us to say that things that appear as crises of the sciences, disinformation, or knowledge production, are at their root crises of capitalism and capitalist production.

My point is that what really needs to change are the systems of political economy in which these things function.

LN: Which, as you said earlier, is a future that may never arrive. Optimism seems expensive, but hope, or remaining open to possibilities, is worth holding onto.

JJ: One of the things I want to leave people with is that statistics is an open philosophical field. There’s obviously complicated math in probability and calculus. But it’s also clear that statistics contains large metaphysical questions. Regardless of one’s comfort levels with mathematics, it’s not so hard to dive in and start asking philosophical, political questions about what it means to say some event is ‘probably’ going to happen.

I hope people read the book and feel this is a debate they can engage with regardless of how they feel about math.

Justin Joque is the visualization librarian at the University of Michigan researching philosophy, technology, and media. He is the author of Deconstruction Machines: Writing in the Age of Cyberwar and Revolutionary Mathematics: Artificial Intelligence, Statistics, and the Logic of Capitalism.

Lydia Nobbs is a Politics PhD student at The New School for Social Research researching the political economy of voice recognition AI.