The day before the 2020 election, I was talking to Maine Democratic Senate candidate Sara Gideon’s ideal voter. She was a woman, a Democrat, and from outside Portland, a city so progressive that on November 3, voters passed five out of six resolutions proposed by Southern Maine Democratic Socialists of America. Going down my list of questions, I asked if she planned to vote a straight Democratic ticket. “I’m definitely voting for Joe Biden, no question,” she said, then hesitated. “But I want to give [incumbent Republican] Susan Collins another chance.”

That wasn’t what the polls were saying – but could the polls be wrong? Outlier Quinnipiac had Gideon up by 14. The poll that came closest to what I was hearing was the Bangor Daily News, which had her in a statistical toss-up that Gideon would win with ranked-choice voting. Most gave Gideon a comfortable lead on the eve of the election. The AP had her up by six, while Nate Silver at FiveThirtyEight, whose forecasting system relies on collecting, averaging, analyzing, and adjusting other people’s polls, gave Gideon a two-point lead and a 60% chance of winning.

Such conversations led me to believe that ticket-splitting, which has decreased dramatically as our politics have polarized, was going to be a problem for Gideon in this sparsely populated, northern New England state. Sadly, the polls were wrong and my conversation with the woman who seemed like the perfect Gideon voter was an omen: Collins won by over eight points, even though Biden snagged almost 53% of the popular vote.

So, what’s wrong with political polls? One answer is: nothing that we don’t know already. Decisions pollsters make shape the data they collect, and this year may have been particularly difficult to forecast since what worked for presidential candidate Joe Biden failed numerous down-ballot Democrats. But knowing that polls can differ, or be flat out wrong, doesn’t quash the howls of outrage when they are.

Why? Because in addition to helping campaigns learn what issues the electorate is most likely to respond to, polls satisfy a deep-seated human wish to know what the future holds.

Polls are almost as old as the American party system itself. The earliest occurred in Wilmington, North Carolina during the election of 1824. It was a straw poll that predicted that the slaveholding Tennessean Andrew Jackson would defeat New Englander John Quincy Adams in the state. “Straw votes” (which were not done with actual straws, but alluded to a straw held in the air to see which way the wind blew) became a method for taking voters’ temperature, not just on election day, but in party conventions as undecided delegates jostled between candidates. (Although Jackson in fact won in North Carolina in 1824, he would have to wait another four years to become president.)

In 1916, The Literary Digest seized on electoral prediction as a direct marketing gimmick to boost subscriptions. Its postcard-based system correctly predicted Woodrow Wilson’s victory in 1916 and every presidential election through 1932. But in 1936, a new type of public opinion poll appeared, one based on a scientific sampling that surveyed representative voters on the basis of race, occupation, and party affiliation. Soon figures whose names still grace the mastheads of polling firms dominated the forecasting field: Elmo Roper, George Gallup, and Louis Harris.

Today, polling and its sister, market research, are a $21 billion industry in the United States alone. Polls are executed and commissioned by commercial firms, media outlets, think tanks, individual campaigns, and by colleges and universities, where phone banks are staffed with political science majors. At last count, the United States had 22 major polling firms that news organizations relied on to tell the story of an election cycle. There is a range of players who do polls, some with openly partisan affiliations, each using a method that they believe will reveal the truth about the electorate.

At the same time, the polling industry is under fire as never before, not just because many of the polls were wrong in 2016, 2018, and 2020, but because there is no longer a consensus within the industry about how best to conduct these polls. For decades, pollsters used live phone calls to random registered voters to assemble their data. In the Forties, when a phone call from a pollster was a novelty, the response rate was very high. But in recent decades, with the advent of answering machines, call screening, and the spread of cell phones, the response rate has plummeted. In a desperate search for new ways of sampling opinion, pollsters have turned to the internet, to social media, and to big data, which some firms now mine to correct for low response rates.

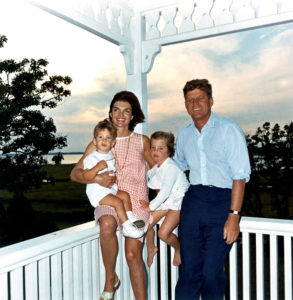

Longtime political consultants, who should know better, sometimes forget that even the best polls cannot take account of every eventuality: voter enthusiasm, turnout, weather, and party loyalty, to name a few. In 2012, Mitt Romney’s campaign was so confident in its numbers that political consultant Karl Rove had a famous on-air meltdown when the Fox News decision desk called the presidential election for Barack Obama.

Moments like this, and Hillary Clinton’s stunning loss in 2016, are part of why politics can be so entertaining – and exhausting. Democrats, weary and anxious after four years of Trumpian chaos, became neurotic poll watchers in 2020, reassuring themselves that Biden’s numbers couldn’t lie – but also knowing from 2016 that, in fact, the numbers can lie. President Trump’s supporters were similarly confident that the polls that had shown the President losing to Biden were “fake.” And in fact, the final polls seemed to badly underestimate the number of new Trump voters, perhaps because many Trump supporters had simply stopped responding to pollsters, who they suspected were biased towards liberals.

Polls added to the drama that played out in American households on November 3, as Americans huddled to watch the early election returns. Despite having been told that, because of the high number of Democrats who had voted early, there would be a “red wave” followed by a slow “blue shift,” Biden supporters suffered a collective coronary when, a few hours into the evening of November 3, Florida—which had shown a slim Democratic lead—fell to Republicans once again.

The polls made that moment worse. As Florida went red, liberal analyst Nate Silver of FiveThirtyEight.com unfroze his prediction, dropping Biden’s chances of winning the election from 88% to 50%. Democratic Twitter exploded, more or less evenly split between sentiments that can be summarized as: “F*ck Florida,” “F*ck Nate Silver,” and “Oh dear God, here we go again.”

Over the next few days, Silver’s original “likely outcome” came true: Biden did win the election. Yet this victory, no narrower than Trump’s in 2016 and, in states like Michigan and Pennsylvania, far more substantial, was not the decisive win Democrats had dreamt of.

Why had the failures of 2016, which so underestimated Donald Trump’s influence with voters, persisted into 2020?

Journalist Nate Cohn, who oversaw the polling team at The New York Times in this election cycle, insists that they haven’t persisted. While immigration played a far smaller role than anyone expected, all the demographics that voted for Trump in equal or greater numbers than in 2016 – Latinos, Black men, Midwestern non-college-educated whites – had shown up in the pre-election polls. While the number of voters shifting red was larger than expected, improved methodologies had nonetheless indicated that they would play a role in keeping the swing states far tighter than Biden’s national margins – and giving down-ballot Democrats a hard night. (It is also true that Biden’s campaign manager, Jen O’Malley Dillon, in a rare interview, explicitly warned Democrats that this was going to be a very tight race.)

But conservative political consultant and Trump partisan Ryan Girdusky, who delights his Twitter followers with his close reading of the cross tabs – portions of the poll that disaggregate the data, questions, and demographic groups surveyed – disagrees with Cohn. Polls once again seriously misread Donald Trump’s support because “They’re sampling overly political people,” Girdusky says. “So those in the middle or without much political interest, but who vote nonetheless, are being under-sampled in the polling. That’s why polls have such wild swings for Democrats.”

At the same time, there is some evidence that Trump’s supporters fear stigma, even from a stranger on the telephone. “The toxic rhetoric around Trump has made people less willing to admit they’re going to vote for him,” Girdusky told me. “According to exit polls, which again are terrible but it’s all we have until we get accurate precinct breakdowns in 6 months, a majority of white women voted for him when he was supposed to lose that group by double digits, 20% of Blacks between the ages of 25-44 voted for him, and in Florida Trump vastly overperformed in not only Cuban areas but in Central American, Puerto Rican and Jewish areas of South Florida.”

To make matters worse, the current crisis in polling methods has occurred at the same time that local newspapers have been disappearing at an alarming rate. In 1990, there were almost 57,000 print journalists in the United States; by 2015, there were slightly fewer than 33,000; and by 2018, that number had dropped to 19,520. A fraction of those is dedicated to political reporting. And while other sectors—digital platforms and cable television—have absorbed some of the journalists shed by newspapers, local political reporting is at an all-time low.

As journalist Susan Glasser noted in her 2016 book Covering Politics in Post-Truth America, when she began her career as a political reporter in the mid-1980s, reporters relied on local papers that no longer exist to understand what was happening in states, cities, and communities far from Washington, New York, and Los Angeles—or even Las Vegas and Tampa. Once a week, Glasser recalled, she and her colleagues at the Washington Post gathered around a large table to “sift through a large stack of clips from local newspapers across the country, organized by state.” Without the kind of evidence these local papers once provided, national reporters have to rely more than ever on the evidence offered by polls – unreliable though many of the state and local ones are.

So, here’s what the polls missed in the race I was working on, and what local reporters might have articulated more clearly. When I pressed my Biden voter about why she was not ready to abandon Susan Collins, she said to me: “Sara Gideon isn’t really from Maine.”

I had heard this from other voters and it’s true: born in Rhode Island, Gideon was educated in Washington, and didn’t move to Maine until 2004. In 2009 she won a seat on the Freeport Town Council, was elected to the Maine House of Representatives in 2012, and by 2016, was elected Speaker. Not only was Gideon not from Maine, but her residency mapped solidly onto her swift rise in Maine Democratic politics.

This was a point that Collins hammered on in her television ads, and as a friend of mine responded when I put this question out on Twitter, “You ain’t kidding. We have family who were born there (but whose parents weren’t), and they’re not considered natives: ‘just because your cat has kittens in the oven, that doesn’t make them muffins.’”

Is this something a poll can pick up? Probably, if you ask the right questions. But it’s notable that the polls did not understand the intensity of loyalty, not to Susan Collins, but to Maine itself, that numbers perhaps cannot reveal. And this may also be why they continue to underestimate the stubborn, lingering passion for Donald Trump that has arguably intensified – and will be with us far beyond January 20, when Joe Biden and Kamala Harris begin the work of rebuilding our nation.

Claire Potter (@TenuredRadical) is co-executive editor of Public Seminar, Professor of History at The New School for Social Research, and author of Political Junkies: From Talk Radio to Twitter, How Alternative Media Hooked Us on Politics and Broke Our Democracy (Basic Books, 2020).

Your Maine observations tally with North Carolina — where Democratic Gov Roy Cooper inspired many Republicans to split their tickets. Given incumbent Senator Tillis’s unpopularity, they might have also voted for Democrat Cunningham. However, not only his extramarital affair but the stupidity that led him to trust email in communicating his affections may have led Republican Cooper voters to not deviate a second time from the GOP.

Thanks for this, Claudia: I think the Maine results also show the limitations of how our politics have gone national. The idea that feminists from around the nation created a fund to dump Collins before Gideon was even nominated may also have rankled voters who dislike “outsiders” interfering with them.