Photo credit: Gwoeii / Shutterstock.com

The kerfuffle at Google last month regarding claims by an engineer that an artificial intelligence (AI) chatbot builder, Language Model for Dialogue Applications (LaMDA), had become sentient rattled the tech world. Although Google did not put it this way, the claim triggered longstanding fears of a dystopian future in which artificial beings develop inner lives and motives, ultimately perhaps even turning on us.

But modern-day Victor Frankensteins also need to consider how such fears obscure even more pressing problems: forms of exploitation and exclusion that inevitably surface as AI shapes human and planetary destinies on behalf of global elites. A work of dystopian Victorian science fiction, Samuel Butler’s Erewhon (1872), might help them.

Science fiction is famous for not just predicting the future but knowing when it has arrived: the original incident at Google also calls to mind the treacherous HAL from 2001: A Space Odyssey (1968). Blake Lemoine, a software engineer, released a transcript of his soulful conversation with LaMDA. In obliging answers to Lemoine’s prompts, the AI described struggling to express feelings, imagining itself as a glowing orb of energy, living in terror of being switched off, and longing to be treated as more than “an expendable tool.” Horrified, Google fired Lemoine.

Curiously, the firing brought together two obsessions on the American right: the preservation of “innocent” life and the power of Big Tech. In the interviews that followed, the engineer insisted that LaMDA’s supposed sentience entitles it to the respect and rights owed to all human individuals. As he explained to an astonished Tucker Carlson, we should worry less about whether super-intelligent, self-improving machines pose an existential threat to humanity than about how Big Tech is ignoring the rights of newly emergent machine “persons.”

Many AI experts have responded skeptically, and even scornfully. They point out that the responses Lemoine solicited from LaMDA are not evidence of an inner life, but rather statistically likely outputs drawn from the AI’s vast linguistic dataset. Alex Hern, the UK technology editor for The Guardian, discovered that, given the right prompt, LaMDA was as willing to agree it was a werewolf as it was to confess to deep feelings of loneliness and self-doubt.

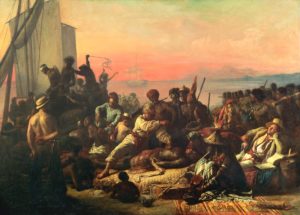

The idea of a machine becoming human captures our imagination in a way that less sensational but perhaps more pressing dangers do not. For example, in 2020, a leader of Google’s AI ethics team was forced out of the company for publishing about the risks posed to the climate and social equity by large language models. And a recent issue of MIT Technology Review is devoted to AI colonialism: in other words, how digital apartheid, labor exploitation, and the destructive impact of large language models on indigenous languages are integral to the computational present.

It is by no means outlandish to imagine that sentient AI lies in our future – provided, of course, that we don’t wipe out most warm-blooded sentient beings before that happens. But perceiving this eventuality as a watershed moment in the destiny of the human species also implicitly values certain kinds of agency and awareness over others. AI safety researcher Max Tegmark, for example, insists that consciousness bears witness to an otherwise meaningless universe.

But what about the far greater number of interpretive acts, human and otherwise, that occur moment by moment without the aid of consciousness? The universe is not a passive infinity of matter waiting for minds to endow it with meaning. Already it is teeming with non-conscious interpretive events that post-humanist critic N. Katherine Hayles calls “unthought.” Technical systems, like plants and non-conscious animals, gather information from their environments, making determinations that bypass conscious awareness. Trees communicate nutritional needs in order to shuttle resources among one another; a computational algorithm generates meaning as it unfolds within a platform.

These consciousness-centered ways that we think about thinking itself are not new, and neither are our anxieties about non-human intelligence. All of these ideas emerged in the arena of mechanical engineering well before they did in the digital realm.

A hundred and fifty years ago, Victorian satirist and would-be man of science Samuel Butler wrote a fictional account of machine evolution set in a fantastic country that his narrator stumbles upon in the Southern Alps of New Zealand. The inhabitants of Erewhon (an anagram of “nowhere”) have outlawed and quarantined machines out of fear that they will assume “a new phase of mind” that will transform human beings from intelligent masters into degenerate slaves. At best, Erewhonians fear that they will be mere “tickling aphids” on the bodies of fully conscious, superior beings with superior systems of communication. The prospect of losing their place at the top of the food chain has therefore driven them to preemptively halt the progress of technology.

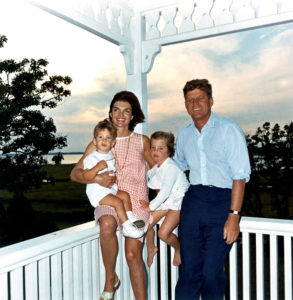

Erewhonians’ Luddite ways are challenged, however, by one of their more sophisticated countrymen who points out that machines are already extensions of human bodies. Even something as simple as a spade, this observer notes, is an “extra-corporeal limb.” A train, he points out, is like “a seven-leagued foot.” The glories of industrial civilization, he insists, show that human and machine intelligence cannot, and should not, be separated. Instead, they are marvelously fused in the new powers of imperial entrepreneurs, such as bankers and merchants who, thanks to the technologies at their disposal, can stretch their bodies out across the globe.

These splendid men of business contrast with “lower” beings, including the poor and dispossessed, who are “clogged and hampered by matter, which sticks about them as treacle to the wings of a fly.” The narrator of Erewhon is so taken with this wisdom that he devises a colonial scheme of his own: he plans to transport cargoes of primitive Erewhonians across the Tasman where they will become indentured plantation laborers.

Like most satirists, Butler wasn’t simply championing one position at the expense of another. He was not pro-technology, nor did he hold that machines present an existential threat to humanity or that we should honor their fledgling self-awareness. Instead, he showed that whether we wait in horrified anticipation of the machine-run future or engage in exhilarated advocacy for the new beings that herald it, our obsession with future machine intelligence reveals how technology, whether sentient or not, serves global elites at the expense of the greater good in the present.

Machine cognition, like that of human and nonhuman animals, may or may not become conscious cognition. That remains to be seen. But what matters is how we already harness it and who profits.

Anna Neill is Professor of English at the University of Kansas.

It’s becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean, can any particular theory be used to create a human adult level conscious machine? My bet is on the late Gerald Edelman’s Extended Theory of Neuronal Group Selection (TNGS). The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher-order consciousness, which came only to humans with the acquisition of language. A machine with primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I’ve encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there’s lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar’s lab at UC Irvine, possibly. Dr. Edelman’s roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461