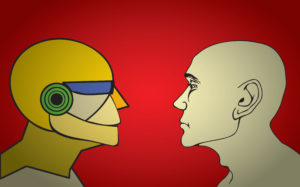

Image credit: Sansoen Saengsakorat / Shutterstock

The following is an excerpt from an essay first published in Social Research: An International Quarterly. It is part of the journal’s issue Frontiers of Social Inquiry.

AI ethics is not really a distinct field or coherent discourse, but rather an amalgamation of different perspectives on the potential implications of automated systems and algorithms making decisions with consequential impacts on human lives. It may even be a misnomer, insofar as many of its concerns are matters of social value or justice rather than individual ethics. But there is value in considering how and why different stakeholders have developed different conceptions and labeled them AI ethics to suit their interests, as well as the ways in which these conceptions have illuminated important questions in the social critique of technology more generally. Indeed, I believe the divergent visions of AI ethics are the best explanation for the success of the term and the activities performed under it, despite its overall incoherence. The various stakeholders have different commitments to ethical theories (mostly Western), as well as differing political and economic interests in AI technology and its applications. These conceptions have mostly fixated on a few of the key social problems raised by AI and on technology companies’ efforts to avoid regulation.

Historically, AI ethics also has roots in machine ethics and robot ethics (Asaro and Wallach, 2017). The earliest work in AI ethics was primarily concerned with abstract philosophical problems in metaethics, such as whether computers could be programmed to make ethical decisions, whether ethical theories like utilitarianism are computationally tractable, and whether sufficiently complex or capable AI systems should be considered to have rights. Within software engineering more generally, software ethics grew directly out of the tradition of engineering ethics described above. It dealt mostly with managing “safety critical” software systems that could directly affect human lives and, to some extent, with growing concerns around data privacy and system security. Principles emerged such as the warning that unstable or imprecise software should not be entrusted with human lives (as in medical systems, flight control systems, nuclear power plant control systems, and other safety critical systems), and the requirement that systems containing personal or valuable information have security mechanisms in place to prevent unauthorized access to such data (as in security by design).

These issues entered public awareness in the 2010s, with the promise and testing of self-driving cars, in which software controls a technology already responsible for tens of thousands of deaths every year, and as data breaches left more and more people’s information vulnerable to identity theft and other harms. Such concerns led to the revival of an old tool from moral philosophy, the trolley problem (Cowls, 2017): a thought experiment on whether it is morally better to act to save several people by killing one person or simply to allow several people to die through inaction. It was also used to show that one’s moral intuitions could easily be shifted or manipulated by adding information to the situation, such as whether to save a young person over an old person, or a “good” person over a “bad” person. Though the new applications of the trolley problem were largely a distraction from, and a misunderstanding of, the original purpose of this philosophical thought experiment, they raised awareness that autonomous systems making decisions based on algorithms would face ethically difficult choices, and that we should have public and professional discussion of how those decisions should be made and establish policies to regulate the process.

In 2013, Google entered discussions to acquire the UK-based machine learning company DeepMind. One stipulation of that acquisition, insisted on by DeepMind’s three founders, was the creation of an internal ethics review board within Google (Legassick and Harding, 2017). While few details about this review board have been made public, it was intended to be an integral part of Google, not just of the DeepMind subsidiary. The only information about the board that had leaked as of 2017 (Hern, 2017) was that it had convened, that it was bringing ethicists from other areas of science and technology “up to speed” on advances and future directions in AI technology, and that it included Nick Bostrom, the Swedish author of Superintelligence (2014). These tidbits suggest that the board was tasked primarily with considering the ethical issues stemming from advanced AI, artificial general intelligence (AGI), or superintelligence, and the long-term threats to humans from creating an AI that is autonomous and beyond human control. This is somewhat ironic, because by that time Google was already one of the pioneers of surveillance capitalism: using data to target advertising to users of its search engine was its main business. The ethics of that practice would likely not have been a topic for discussion by the ethics board.

A great deal of digital ink has been spilled discussing the potential long-term consequences of AI and the almost mystical properties of AI that transcend human intelligence (Asaro, 2001). Some people working in AI ethics argue that these are the most important ethical issues, because AI could lead to the end of human civilization or to AI beings that go forth to colonize the solar system, the galaxy, or beyond (Bostrom, 2014; Russell, 2019). These ideas have gained a great deal of traction in Silicon Valley more generally. Most AI ethics researchers, however, are more concerned with near-term risks to society, jobs, and individuals. Indeed, the focus on far-off consequences, or “longtermism,” is largely a distraction from already existing threats to human rights, fairness, justice, equality, and safety, and from other more immediate, tangible, and avoidable risks. In this sense, longtermism is itself a form of “ethics washing,” because it steers attention away from these pressing issues and toward problems with little bearing on current business practices.

While the creation of such an ethics board at Google was innovative at the time, the secrecy around it was puzzling. One of the main functions of such boards, like institutional review boards for scientific research, is to avoid potential legal liability in case things go wrong and to assure the public, customers, and investors that there is meaningful oversight of any potentially harmful research, as well as to put in place internal processes for reducing the risks of such work. However, for a board to fully serve these functions, particularly to build public trust, some degree of transparency is required, and public announcements of some of its major decisions would be even better. At the very least, announcing who sits on the board could build confidence based on the members’ reputations, even if their actual authority and power were unknown or limited. Several other companies followed suit in creating such boards, some of them more transparent, like the Texas startup Lucid.AI. Big Tech companies like Microsoft also created AI ethics groups in 2017, only to disband them in 2023 (Belanger, 2023), while Meta created an oversight board for ethical questions around content moderation on its social media platforms Facebook and Instagram (Wong and Floridi, 2023).

Next, a series of companies issued “AI ethics principles.” These were mostly abstract lists of ethical principles that companies touted to assure customers and the public that they would build only useful and beneficial AI and would avoid building anything that might harm society. Google led this trend with a set of principles released during an employee protest over the company’s participation in Project Maven (Google, n.d.), a Pentagon project to use AI to analyze the vast quantities of video data collected by drones in US military operations in Iraq and Afghanistan. Accordingly, Google’s principles included a commitment not to develop “weapons,” but stopped short of pledging not to work for militaries, or of committing to keep its technologies from being used as a component of some future weapon or in helping to choose targets for weapons (Godz, 2018; Suchman, Irani, and Asaro, 2018). Other companies followed suit, and within a few years there were well over 100 sets of “AI ethical principles” (Winfield, 2019).

These sets of principles served technology companies’ interest in “ethics washing” their images and products by showing that they cared about developing the technology ethically. However, as with the ethics boards, there was little transparency about how the principles would be applied or interpreted. Google’s CEO would not even say whether Project Maven met the criteria for being a component of a weapon system, given the stated principle not to work on weapons; and while Google’s leadership said it would not renew the Pentagon contract, it did not cancel the contract but continued working on the project for some time, and perhaps still is. Intrinsically, any set of ethical principles, like engineering codes of conduct, is abstract and requires interpretation when applied to concrete problems and cases. Such principles are also nonbinding and unenforced: no one can really review how they are being applied or hold companies accountable when they are violated. Moreover, they offer little guidance on how to design software or on what kinds of considerations should influence design choices. More recent efforts by the standard-setting bodies of professional organizations like the Association for Computing Machinery (ACM) and the Institute of Electrical and Electronics Engineers (IEEE) have produced more robust ethical principles meant to guide programmers and engineers working on AI and software projects, but these too are nonbinding (IEEE, 2017; ACM, 2018).

These ethics washing efforts were clear attempts to avoid any real, legally binding regulation of the industry. There is as yet no such regulation in the US, and the EU is only now implementing its first dedicated AI regulatory framework, while a few AI-related clauses exist in earlier regulations such as the EU’s General Data Protection Regulation.

The other thread of AI ethics that emerged in the 2010s was a series of technological critiques and sociological analyses that examined how technology affects different people and groups differently. In particular, many technologies were being developed that imposed draconian social policies on the poor, discriminated against already marginalized groups based on race, age, class, gender, skin color, or religion, or otherwise showed biases in their effects on different groups. We can describe the various efforts in this thread as aiming for “data justice” or “algorithmic justice.” Examples include the ways in which implementations of AI and algorithmic technologies can be racist, sexist, or classist (see Asaro, 2016; O’Neil, 2016, 2023; Eubanks, 2017; Hicks, 2017; Noble, 2018; Benjamin, 2019). These problems were explored more technically through a series of annual ACM conferences on fairness, accountability, and transparency in algorithms that began in 2018. These conferences examined the ways existing social biases in datasets are replicated, amplified, and exacerbated by learning algorithms, and explored strategies to detect, minimize, and eliminate these biases. For example, an algorithm for determining the salary to offer a new hire might be trained on past salaries, which we know tend to be lower for women than for men, and lower for women of color than for white women. Such an algorithm might then identify the gender and race of a job candidate and, based simply on these characteristics, offer them a lower salary than it would offer an otherwise equally qualified candidate. The algorithm would simply be identifying the historic trend and continuing it going forward. How to prevent such algorithms from doing so is a difficult and important area of research.
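The salary example can be made concrete with a small sketch. What follows is a hypothetical toy, not any real hiring system or a method from the works cited: it assumes invented historical records in which women were paid 5,000 less at equal experience, considers only gender (not race) for simplicity, and uses ordinary least squares linear regression as a stand-in for a "learning algorithm." The names `fit_ols`, `make_history`, and `offer` are illustrative inventions.

```python
from typing import List, Tuple

# Toy illustration only: every number here is invented, and a small
# linear model is a stand-in for far more complex real-world systems.


def fit_ols(X: List[List[float]], y: List[float]) -> List[float]:
    """Ordinary least squares: solve (X^T X) w = X^T y by Gauss-Jordan elimination.
    Fine for tiny, well-conditioned toy data; not a numerically robust solver."""
    n = len(X[0])
    # Augmented matrix [X^T X | X^T y].
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(n)]
        + [sum(row[i] * t for row, t in zip(X, y))]
        for i in range(n)
    ]
    for col in range(n):
        # Partial pivoting: move the row with the largest entry in this column up.
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][n] / A[i][i] for i in range(n)]


def make_history() -> List[Tuple[int, int, int]]:
    """Hypothetical past hires as (years_experience, is_woman, salary).
    At equal experience, women were historically paid 5,000 less."""
    rows = []
    for years in range(10):
        for is_woman in (0, 1):
            salary = 50_000 + 2_000 * years - 5_000 * is_woman
            rows.append((years, is_woman, salary))
    return rows


history = make_history()
# Features: intercept, years of experience, and the gender flag.
X = [[1.0, float(years), float(woman)] for years, woman, _ in history]
y = [float(salary) for _, _, salary in history]
w = fit_ols(X, y)


def offer(years: int, is_woman: int) -> float:
    """Salary the fitted model would offer a new candidate."""
    return w[0] + w[1] * years + w[2] * is_woman


gap = offer(5, 0) - offer(5, 1)
print(f"Offer at 5 years: man {offer(5, 0):,.0f}, woman {offer(5, 1):,.0f}")
print(f"Gap learned from history: {gap:,.0f}")
```

Because the model only fits the historical pattern, it offers two equally experienced candidates salaries that differ by the gap baked into the past data. Deleting the `is_woman` column is not by itself a reliable fix, since other features correlated with gender can act as proxies and carry the same pattern forward.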

Peter M. Asaro is the cofounder of the International Committee for Robot Arms Control and the Campaign to Stop Killer Robots and an associate professor in the School of Media Studies at The New School.
